Since my last update on my project, a quadcopter for fully autonomous indoor 3D reconstruction, I have implemented simple takeoff and landing routines as a first step toward fully autonomous navigation. To spin up the motors smoothly, the autonomous takeoff routine starts with a set point 4 meters below ground and raises it to the actual scanning height. Landing follows the reverse approach, with an additional intermediate step at a height of 20 centimeters to avoid a crash landing.
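A minimal sketch of how such height set point profiles could be generated, in Python. The function names, climb rate, sample time, hold duration and scanning height are hypothetical values chosen for illustration, not the ones used on the quadcopter, and heights are assumed positive upward (a north-east-down frame would flip the sign).

```python
import math


def ramp(start, end, rate, dt):
    """Move a setpoint linearly from `start` to `end` at `rate` m/s, sampled every `dt` s."""
    steps = max(1, math.ceil(abs(end - start) / (rate * dt)))
    # Intermediate samples, then the exact end value so the next segment joins cleanly.
    return [start + (end - start) * i / steps for i in range(steps)] + [end]


def takeoff_profile(scan_height, start_height=-4.0, rate=0.5, dt=0.1):
    """Takeoff: start the setpoint below ground (assumed -4 m) and raise it to scan height."""
    return ramp(start_height, scan_height, rate, dt)


def landing_profile(scan_height, hover_height=0.2, hold_s=2.0,
                    end_height=-4.0, rate=0.5, dt=0.1):
    """Landing: descend, hold at `hover_height` (20 cm), then sink the setpoint below ground."""
    descent = ramp(scan_height, hover_height, rate, dt)
    # Hypothetical hold duration at the intermediate 20 cm step.
    hold = [hover_height] * int(round(hold_s / dt))
    # Drop the first sample of the final ramp; it duplicates `hover_height`.
    touchdown = ramp(hover_height, end_height, rate, dt)[1:]
    return descent + hold + touchdown
```

Starting the set point below ground means the height controller initially commands less thrust than needed to lift off, so thrust builds up gradually as the set point rises instead of jumping at the first sample.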

With a first simple trajectory, consisting of the takeoff routine, an arc around a point (the center of the object to be scanned), and the landing routine, the quadcopter can already reconstruct simple objects.

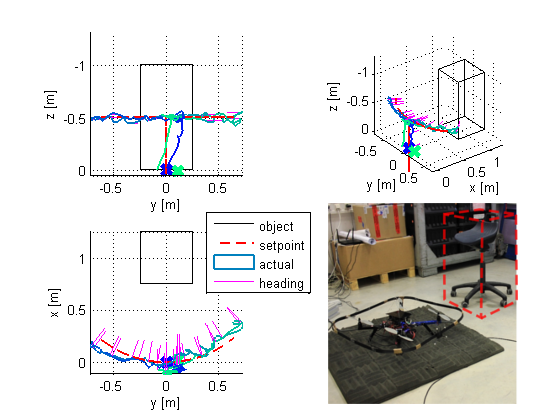

Trajectory tracking deviation. Dashed lines are the set points, and the solid lines are the actual coordinates.

Plotting the same data in 3D makes the trajectory visible.

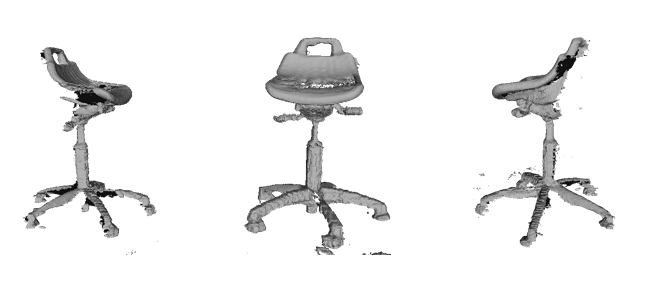

Both the arc-shaped set point trajectory and the actual trajectory are clearly visible. The magenta lines show the heading at several intermediate points (it should point to the center of the arc). As a bonus, the ReconstructMe SDK outputs a full reconstruction of the chair used for tracking.
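The arc set points and their heading requirement can be sketched as waypoints on a circle around the object center, each with a yaw angle that points back at that center. This is an illustrative sketch only: the function name, the constant height and the yaw convention (counterclockwise from the x-axis, with x/y horizontal) are assumptions, not details from the original setup.

```python
import math


def arc_waypoints(cx, cy, radius, z, start_angle, end_angle, n):
    """Return n waypoints (x, y, z, yaw) on an arc around (cx, cy).

    Yaw is measured counterclockwise from the x-axis (assumed convention)
    and is chosen so the vehicle always faces the arc center.
    """
    if n < 2:
        raise ValueError("need at least two waypoints")
    waypoints = []
    for i in range(n):
        angle = start_angle + (end_angle - start_angle) * i / (n - 1)
        x = cx + radius * math.cos(angle)
        y = cy + radius * math.sin(angle)
        # Heading of the vector from the waypoint to the center.
        yaw = math.atan2(cy - y, cx - x)
        waypoints.append((x, y, z, yaw))
    return waypoints
```

For example, `arc_waypoints(0.0, 0.0, 1.5, 1.0, 0.0, math.pi, 7)` yields seven set points on a half circle of 1.5 m radius at 1 m height, all facing the origin.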

I also made a video of such an experimental run, so you can see the fully autonomous quadcopter for indoor 3D reconstruction in action.

Hello Christoph,

I’m from Florida Tech, in the aerospace engineering lab, and we are really impressed by and optimistic about the STRUCTURE Sensor.

We already have a couple of them working on dynamic slosh scanning. I just saw your work and the video (which I found really impressive), and I wanted to know if you could give me some pointers on how to get the trajectory of the sensor in real time.

Thank you in advance.

Best regards,

Mehdi

Hi,

You need to track the camera movement. This can be done using the input data, external sensors, or a combination of both. Look into registration algorithms or search for visual odometry.
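As a rough illustration of the registration idea, here is the closed-form least-squares rigid alignment of two matched point sets, simplified to 2D. It assumes the point correspondences are already known; a real visual odometry or ICP pipeline has to find them and works in 3D (for example with the SVD-based Kabsch solution). The function name is hypothetical. Chaining the relative poses estimated between consecutive frames gives the sensor trajectory.

```python
import math


def rigid_align_2d(src, dst):
    """Find rotation theta and translation (tx, ty) minimizing sum |R(theta) p + t - q|^2
    over matched pairs (p, q) from `src` and `dst`."""
    n = len(src)
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(q[0] for q in dst) / n
    dy = sum(q[1] for q in dst) / n
    cross = dot = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        # Work with centroid-free coordinates so only the rotation remains.
        ax, ay = px - sx, py - sy
        bx, by = qx - dx, qy - dy
        cross += ax * by - ay * bx
        dot += ax * bx + ay * by
    # The optimal angle maximizes sum(b . R a) = cos(theta) * dot + sin(theta) * cross.
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation maps the rotated source centroid onto the destination centroid.
    tx = dx - (c * sx - s * sy)
    ty = dy - (s * sx + c * sy)
    return theta, (tx, ty)
```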

Hi, I read your article at https://pixhawk.org/_media/firmware/apps/attitude_estimator_ekf/ekf_excerptmasterthesis.pdf.

However, it is not complete.

I have searched for a long time, but I could only find this: http://www.robotik.jku.at/education/diplomarbeiten/da_huber_gerold/Poster_Huber_Gerold.pdf

Could you please send the whole article to me?

Thanks!

Hi why,

I actually don’t have it at hand. I will try to reach Gerold so he can make it publicly available.

Best,

Christoph

Hey,

I’m also trying to get a read of the thesis. Is there any way to get access to it?

Thanks

Akshay